"How accurate is your RAG system?" seems like a straightforward question, but accuracy alone tells only part of the story. A system that retrieves relevant documents but generates unfaithful answers is dangerous. One that produces factual responses based on irrelevant context is unreliable. Comprehensive evaluation requires measuring multiple dimensions of system behavior, and doing so in ways that drive meaningful improvement.

The evaluation challenge

RAG systems combine two distinct capabilities: information retrieval and language generation. Each can fail independently. Your retrieval might surface the perfect document while the LLM hallucinates an answer from its training data. Conversely, retrieval might miss crucial information while the LLM happens to know the answer from pre-training. Understanding which component fails, and why, is essential for targeted improvement.

The challenge deepens when you consider that "correctness" itself is multifaceted. Is the answer factually accurate? Is it grounded in the retrieved context? Does it actually address the user's intent? These are related but distinct questions, and a system might score well on one dimension while failing on others.

Retrieval metrics

Before evaluating generation quality, you need to understand whether your retrieval system surfaces the right information. The classic information retrieval metrics remain foundational, but their application to RAG contexts requires careful consideration.

Recall and precision at K

Recall@K measures what fraction of all relevant documents appear in your top K results. Precision@K measures what fraction of your top K results are actually relevant. For most RAG applications, recall matters more than precision: the LLM can often ignore irrelevant context, but it cannot reason over documents it never sees. Precision still matters at the margin, since every irrelevant passage consumes context window and adds cost.
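
As a rough illustration of the two definitions, here is a minimal sketch assuming binary relevance labels and unique document IDs in the ranked list. The function names and the sample IDs are made up for this example.

```python
from typing import Sequence, Set


def recall_at_k(ranked_ids: Sequence[str], relevant_ids: Set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k results."""
    if not relevant_ids:
        return 0.0
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / len(relevant_ids)


def precision_at_k(ranked_ids: Sequence[str], relevant_ids: Set[str], k: int) -> float:
    """Fraction of the top k results that are relevant."""
    if k <= 0:
        return 0.0
    hits = sum(1 for doc_id in ranked_ids[:k] if doc_id in relevant_ids)
    return hits / k


# Hypothetical query: three labeled relevant documents, five retrieved.
ranked = ["d3", "d7", "d1", "d9", "d4"]
relevant = {"d1", "d2", "d3"}

print(recall_at_k(ranked, relevant, 3))     # 2 of the 3 relevant docs are in the top 3
print(precision_at_k(ranked, relevant, 3))  # 2 of the top 3 results are relevant
```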

The challenge lies in defining "relevant". In traditional search, relevance is binary: a document either answers the query or it does not. In RAG, relevance is more nuanced. A document might contain the answer, provide useful context, offer contradictory evidence, or be tangentially related. Your evaluation approach should reflect these distinctions.

Mean Reciprocal Rank (MRR)

MRR measures how early the first relevant document appears in your results. For each query, the reciprocal rank is 1/rank of the highest-ranked relevant document, or 0 if no relevant document is retrieved; MRR is the mean of these values across queries. High MRR indicates that relevant information appears near the top, which matters because context window limitations mean the LLM may not see documents ranked lower.
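
A minimal sketch of this computation, assuming binary relevance and one ranked list per query. Queries with no relevant document retrieved contribute 0, which is a common convention rather than the only one.

```python
from typing import List, Sequence, Set


def mean_reciprocal_rank(results: List[Sequence[str]], relevant: List[Set[str]]) -> float:
    """Average of 1/rank of the first relevant document over all queries."""
    reciprocal_ranks = []
    for ranked_ids, relevant_ids in zip(results, relevant):
        rr = 0.0  # stays 0 when no relevant document is retrieved
        for rank, doc_id in enumerate(ranked_ids, start=1):
            if doc_id in relevant_ids:
                rr = 1.0 / rank
                break
        reciprocal_ranks.append(rr)
    return sum(reciprocal_ranks) / len(reciprocal_ranks) if reciprocal_ranks else 0.0


# Three hypothetical queries: first hit at rank 1, at rank 3, and no hit.
results = [["a", "b", "c"], ["x", "y", "z"], ["p", "q", "r"]]
relevant = [{"a"}, {"z"}, {"missing"}]
mrr = mean_reciprocal_rank(results, relevant)
print(round(mrr, 3))  # (1 + 1/3 + 0) / 3
```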

Normalized Discounted Cumulative Gain (NDCG)

When relevance is not binary, NDCG provides a more nuanced view. It assigns higher scores when highly relevant documents appear earlier in the results, with a logarithmic discount for lower positions. This is particularly useful when documents have varying degrees of relevance to the query.
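
The sketch below shows one common formulation, assuming graded relevance labels (for example 0 to 3) and a linear gain. Some implementations use an exponential gain of 2^rel - 1 instead, so scores are not comparable across formulations. The ideal ranking is computed here from all judged documents for the query, which is also an assumption of this example.

```python
import math
from typing import Sequence


def dcg(gains: Sequence[float]) -> float:
    """Discounted cumulative gain: position i (0-based) is discounted by log2(i + 2)."""
    return sum(g / math.log2(i + 2) for i, g in enumerate(gains))


def ndcg_at_k(ranked_gains: Sequence[float], judged_gains: Sequence[float], k: int) -> float:
    """DCG of the top k results divided by the DCG of the best possible top k."""
    ideal = sorted(judged_gains, reverse=True)[:k]
    ideal_dcg = dcg(ideal)
    return dcg(ranked_gains[:k]) / ideal_dcg if ideal_dcg > 0 else 0.0


# Hypothetical query: the retriever returned documents graded 3, 0, 2,
# while the judged pool contains documents graded 3, 2, 1, 0.
score = ndcg_at_k([3, 0, 2], [3, 2, 1, 0], k=3)
print(round(score, 3))
```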

Practical tip: Creating evaluation datasets

Building a labeled evaluation dataset is often the most time-consuming part of RAG evaluation. Start with a modest set of 50-100 diverse queries, carefully labeled by domain experts. Quality matters more than quantity. As your system evolves, expand this dataset to cover failure cases you discover in production. Consider using stratified sampling to ensure coverage of different query types, document types, and difficulty levels.

Generation metrics

Once retrieval surfaces the right documents, you need to evaluate whether the LLM generates appropriate responses. This is where evaluation becomes particularly challenging, as you are assessing natural language output that may be correct in different ways.

Faithfulness

Faithfulness measures whether the generated answer is supported by the retrieved context. A faithful answer does not introduce claims that cannot be traced back to the source documents. This is distinct from accuracy: an answer can be accurate (matching ground truth) but unfaithful (including information not in the context).

Evaluating faithfulness typically requires either human annotation or LLM-based evaluation. The latter approach uses a separate LLM to assess whether each claim in the answer can be verified from the context. While not perfect, this provides a scalable approximation of human judgment.
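
One way to turn claim-level checks into a score is the fraction of claims the judge marks as supported. The sketch below assumes the answer has already been split into claims and that `is_supported` is a judge you supply. In practice that judge would wrap an LLM call; the substring-based `naive_judge` here is only a stand-in so the example runs, and it is far too strict for real use.

```python
from typing import Callable, List


def faithfulness_score(
    claims: List[str],
    context: str,
    is_supported: Callable[[str, str], bool],
) -> float:
    """Fraction of claims that the judge considers supported by the context."""
    if not claims:
        raise ValueError("no claims to score")
    supported = sum(1 for claim in claims if is_supported(claim, context))
    return supported / len(claims)


def naive_judge(claim: str, context: str) -> bool:
    # Placeholder judge (assumption): case-insensitive substring match.
    # Replace with an LLM-based or human verdict in a real pipeline.
    return claim.lower() in context.lower()


context = "The warranty lasts two years. Returns are accepted within 30 days."
claims = [
    "the warranty lasts two years",
    "returns are accepted within 60 days",  # not supported by the context
]
score = faithfulness_score(claims, context, naive_judge)
print(score)
```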

Answer relevance

A faithful answer is not necessarily a useful one. Answer relevance measures whether the response actually addresses the user's question. A system might faithfully summarize retrieved documents without answering what was asked. Evaluating relevance requires understanding user intent, which often requires human judgment or carefully designed LLM-based evaluation prompts.

Factual correctness

When you have ground-truth answers available, you can measure factual correctness directly. This might use exact match for factoid questions, token-level F1 scores for longer answers, or semantic similarity metrics for open-ended responses. Be cautious about relying solely on automatic metrics here; correct answers can be phrased in many ways that surface metrics might not recognize.
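
For the first two options, a minimal sketch of exact match and token-overlap F1 might look like this. The normalization (lowercasing and replacing punctuation with spaces) is a simplifying assumption; benchmark scripts often normalize further, for example by dropping articles.

```python
import re
from collections import Counter
from typing import List


def normalize(text: str) -> List[str]:
    """Lowercase, replace punctuation with spaces, and split into tokens."""
    return re.sub(r"[^\w\s]", " ", text.lower()).split()


def exact_match(prediction: str, truth: str) -> bool:
    return normalize(prediction) == normalize(truth)


def token_f1(prediction: str, truth: str) -> float:
    """Harmonic mean of token precision and recall between prediction and truth."""
    pred_tokens, truth_tokens = normalize(prediction), normalize(truth)
    if not pred_tokens or not truth_tokens:
        return float(pred_tokens == truth_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(truth_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(truth_tokens)
    return 2 * precision * recall / (precision + recall)


# A correct but differently phrased answer fails exact match and gets partial F1.
print(exact_match("Paris, France", "Paris"))
print(round(token_f1("Paris, France", "Paris"), 3))
```

The example shows the limitation the paragraph above warns about: a correct answer with extra detail is penalized by both metrics.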

End-to-end metrics

Component-level metrics help diagnose issues, but end-to-end metrics reflect the user experience. These holistic measures capture how well the entire system performs its intended function.

Answer rate

What percentage of queries receive a substantive answer versus an abstention or "I don't know" response? A system that never answers is useless; one that always answers (even when it should not) is unreliable. Track this rate across different query types to understand where your system is confident versus uncertain.
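
A rough sketch of tracking answer rate per query type follows. Detecting abstentions by matching phrases is an assumption made for this example, and the marker list is hypothetical; a production system would more likely emit an explicit abstention flag.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Hypothetical abstention phrases; adjust to your system's actual wording.
ABSTENTION_MARKERS = ("i don't know", "i do not know", "cannot answer")


def is_abstention(answer: str) -> bool:
    lowered = answer.lower()
    return any(marker in lowered for marker in ABSTENTION_MARKERS)


def answer_rate_by_type(records: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """records are (query_type, answer) pairs; returns answered share per type."""
    totals: Dict[str, int] = defaultdict(int)
    answered: Dict[str, int] = defaultdict(int)
    for query_type, answer in records:
        totals[query_type] += 1
        if not is_abstention(answer):
            answered[query_type] += 1
    return {query_type: answered[query_type] / totals[query_type] for query_type in totals}


records = [
    ("billing", "Your invoice is due on the 5th."),
    ("billing", "I don't know."),
    ("legal", "I cannot answer that."),
]
rates = answer_rate_by_type(records)
print(rates)
```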

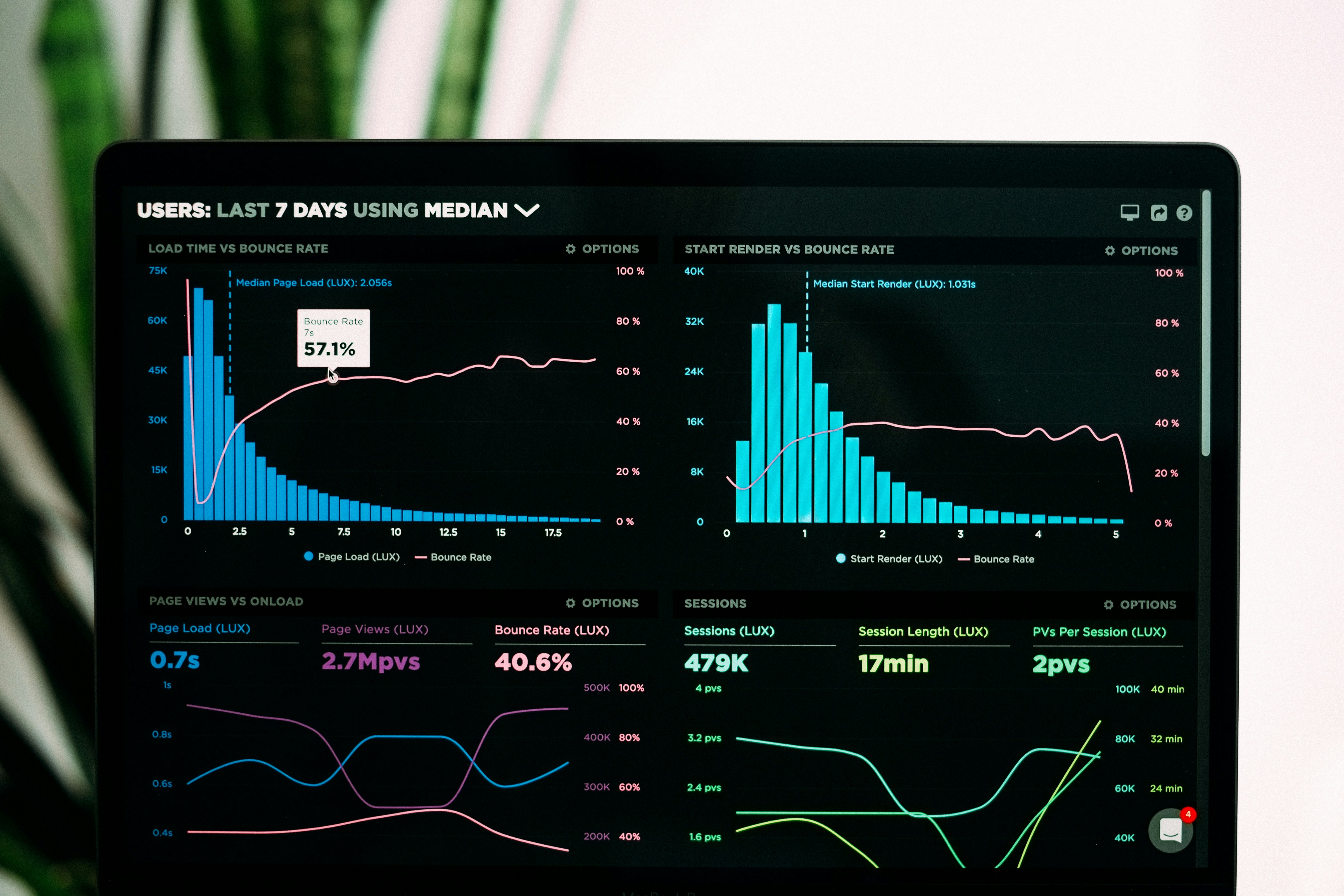

Latency

User experience depends heavily on response time. Measure p50 (median), p90, and p99 latencies separately. A system with good median latency but terrible tail latency will frustrate users. Break down latency by component (embedding, retrieval, generation) to identify bottlenecks.
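
A minimal sketch of computing these percentiles with the Python standard library, assuming you have collected per-request latencies in milliseconds. The same function can be applied separately to embedding, retrieval, and generation timings.

```python
import statistics
from typing import Dict, Sequence


def latency_percentiles(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Return p50, p90, and p99 using linear interpolation between samples."""
    # quantiles(n=100) returns 99 cut points; index i is the (i + 1)th percentile.
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p90": cuts[89], "p99": cuts[98]}


# Synthetic data for illustration: latencies of 1 ms through 101 ms.
samples = list(range(1, 102))
stats = latency_percentiles(samples)
print(stats)
```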

Cost per query

Cost per query is what it costs to serve a single request. For on-premise deployments, this translates to compute utilization. For cloud-based LLMs, it is direct API cost. Understanding cost per query helps you balance quality against operational constraints and make informed decisions about model selection and context window sizing.
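
For token-priced APIs the arithmetic is simple enough to sketch. The prices below are placeholders chosen for round numbers, not any provider's actual rates, and the sketch ignores embedding and retrieval costs.

```python
def cost_per_query(
    prompt_tokens: int,
    completion_tokens: int,
    input_price_per_1k: float,
    output_price_per_1k: float,
) -> float:
    """Cost of one LLM call when input and output tokens are priced per 1,000."""
    input_cost = prompt_tokens / 1000 * input_price_per_1k
    output_cost = completion_tokens / 1000 * output_price_per_1k
    return input_cost + output_cost


# Hypothetical prices: 0.50 per 1k input tokens, 1.50 per 1k output tokens.
large_context = cost_per_query(4000, 500, 0.50, 1.50)
small_context = cost_per_query(2000, 500, 0.50, 1.50)
print(large_context, small_context)
```

Under these assumed prices, halving the retrieved context from 4,000 to 2,000 prompt tokens cuts the per-query cost from 2.75 to 1.75, which is the kind of trade-off to weigh against any drop in recall.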

LLM-based evaluation

Manual evaluation does not scale, and traditional NLP metrics like BLEU and ROUGE correlate poorly with human judgment for generative tasks. LLM-based evaluation has emerged as a practical middle ground: using one LLM to evaluate another.

Design considerations

Effective LLM-based evaluation requires careful prompt engineering. The evaluation prompt should clearly define the criteria being assessed, provide examples of good and poor responses, and specify the output format (typically a score with reasoning). Using a stronger model as the evaluator generally produces more reliable results.

Be aware of known biases in LLM evaluation. Models tend to prefer longer responses, favor their own outputs, and are sensitive to the order in which options are presented. Design your evaluation protocol to mitigate these biases through randomization and calibration against human judgments.
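
One simple mitigation for order sensitivity in pairwise comparisons is to run the judge twice with the answers swapped and only count a win when both runs agree. The sketch below assumes a `judge` callable that returns "first" or "second"; the two stub judges are hypothetical and exist only to make the example runnable.

```python
from typing import Callable

Judge = Callable[[str, str, str], str]  # (question, first, second) -> "first" | "second"


def debiased_preference(question: str, answer_a: str, answer_b: str, judge: Judge) -> str:
    """Return "a" or "b" only if the verdict survives swapping positions, else "tie"."""
    forward = judge(question, answer_a, answer_b)   # A shown first
    backward = judge(question, answer_b, answer_a)  # B shown first
    a_wins_forward = forward == "first"
    a_wins_backward = backward == "second"
    if a_wins_forward and a_wins_backward:
        return "a"
    if not a_wins_forward and not a_wins_backward:
        return "b"
    return "tie"  # verdict flipped with position, so treat it as unreliable


def position_biased_judge(question: str, first: str, second: str) -> str:
    return "first"  # stub: always prefers whatever is shown first


def length_judge(question: str, first: str, second: str) -> str:
    return "first" if len(first) >= len(second) else "second"  # stub: prefers longer text


q = "What is the refund window?"
print(debiased_preference(q, "30 days.", "Refunds are accepted within 30 days.", position_biased_judge))
print(debiased_preference(q, "30 days.", "Refunds are accepted within 30 days.", length_judge))
```

Note that swapping removes position effects but not length bias: the second stub judge still consistently prefers the longer answer, which is why calibration against human judgments remains necessary.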

Evaluation frameworks

Several open-source frameworks can accelerate your evaluation pipeline development:

- RAGAS: Provides metrics for faithfulness, answer relevance, context relevance, and context recall with LLM-based evaluation.

- DeepEval: Offers a comprehensive suite of metrics with customizable evaluation criteria.

- TruLens: Focuses on tracking and evaluating LLM applications with built-in RAG metrics.

- LangSmith: Provides evaluation features alongside tracing and debugging. It comes from the LangChain team but can also be used with applications that do not use LangChain.

Building an evaluation pipeline

Effective evaluation is not a one-time activity but an ongoing process integrated into your development workflow. A well-designed evaluation pipeline catches regressions before they reach production and provides data for informed decision-making.

Offline evaluation

Run comprehensive evaluations against your test dataset before deploying changes. This should include retrieval metrics, generation quality metrics, and regression tests for known failure cases. Automate this as part of your CI/CD pipeline to prevent quality degradation.
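
A regression gate can be as small as comparing the current run against a stored baseline. The sketch below assumes every metric is "higher is better" and uses a hypothetical tolerance of 0.02; the metric names and values are illustrative only.

```python
from typing import Dict, Optional, Tuple


def find_regressions(
    baseline: Dict[str, float],
    current: Dict[str, float],
    tolerance: float = 0.02,
) -> Dict[str, Tuple[float, Optional[float]]]:
    """Return metrics that are missing or dropped more than `tolerance` below baseline."""
    regressions = {}
    for name, base_value in baseline.items():
        current_value = current.get(name)
        if current_value is None or current_value < base_value - tolerance:
            regressions[name] = (base_value, current_value)
    return regressions


baseline = {"recall@5": 0.80, "faithfulness": 0.90}
current = {"recall@5": 0.79, "faithfulness": 0.85}

regressions = find_regressions(baseline, current)
for name, (before, after) in regressions.items():
    print(f"REGRESSION {name}: {before} -> {after}")
```

In a CI job, a non-empty result would typically cause the step to exit with a non-zero status so the change is blocked until someone reviews it.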

Online evaluation

Production behavior often differs from test conditions. Implement lightweight online evaluation that samples production queries and assesses response quality. Track metrics over time to detect gradual drift or sudden degradation. Consider collecting implicit feedback signals like follow-up queries or reformulations.

Human evaluation

Periodic human evaluation remains essential for calibrating automated metrics and catching issues that automated evaluation misses. Design annotation guidelines that are specific enough to be consistent but flexible enough to capture nuanced judgments. Use multiple annotators and measure inter-annotator agreement to ensure reliability.
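
Cohen's kappa is one widely used agreement measure for two annotators assigning categorical labels. It corrects raw agreement for the agreement expected by chance. The sketch below is a minimal implementation; the labels are made up for illustration.

```python
from collections import Counter
from typing import Hashable, Sequence


def cohens_kappa(labels_a: Sequence[Hashable], labels_b: Sequence[Hashable]) -> float:
    """Chance-corrected agreement between two annotators on the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("annotators must label the same non-empty set of items")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Probability that both annotators pick the same label independently.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["faithful", "faithful", "unfaithful", "unfaithful"]
annotator_2 = ["faithful", "unfaithful", "unfaithful", "unfaithful"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(kappa)
```

Here the annotators agree on 3 of 4 items (0.75 raw agreement), but chance agreement is 0.5, so kappa is noticeably lower than the raw figure suggests.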

From metrics to improvement

Metrics are only valuable if they drive action. Establish clear ownership for metric targets and create feedback loops that translate metric movements into engineering priorities.

Error analysis

When metrics indicate problems, systematic error analysis reveals root causes. Categorize failures by type: retrieval failures (relevant documents not found), generation failures (poor answers despite good context), or scope failures (queries outside system capabilities). Each category requires different interventions.
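
The three categories above can be encoded as a small decision rule and tallied over a batch of reviewed cases. The field names and the order of the checks are assumptions of this sketch; the labels themselves would come from human review or automated judges.

```python
from collections import Counter


def categorize_failure(in_scope: bool, relevant_retrieved: bool, answer_correct: bool) -> str:
    """Assign one reviewed case to a failure category (or "ok")."""
    if answer_correct:
        return "ok"
    if not in_scope:
        return "scope_failure"       # query is outside what the system covers
    if not relevant_retrieved:
        return "retrieval_failure"   # relevant documents were not found
    return "generation_failure"      # poor answer despite good context


# Hypothetical reviewed cases.
cases = [
    {"in_scope": True, "relevant_retrieved": False, "answer_correct": False},
    {"in_scope": True, "relevant_retrieved": True, "answer_correct": False},
    {"in_scope": False, "relevant_retrieved": False, "answer_correct": False},
    {"in_scope": True, "relevant_retrieved": True, "answer_correct": True},
]
breakdown = Counter(categorize_failure(**case) for case in cases)
print(breakdown)
```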

Targeted improvements

Use error analysis to prioritize improvements. Low recall might indicate chunking problems or embedding limitations. Low faithfulness might suggest prompt engineering opportunities or the need for more constrained generation. Track how changes affect specific failure categories, not just aggregate metrics.

Recommendations

1. Start with retrieval metrics. If retrieval fails, generation cannot succeed. Invest in a labeled dataset that measures recall and precision for your specific domain.

2. Measure faithfulness explicitly. Unfaithful answers are the most dangerous failure mode in RAG: confidently wrong answers that appear plausible. Prioritize this metric over raw accuracy.

3. Calibrate automated metrics against human judgment. Periodically validate that your automated metrics correlate with what humans care about.

4. Track metrics at multiple granularities. Aggregate numbers hide important patterns. Segment by query type, document source, and user segment.

5. Build evaluation into your workflow. Automated evaluation on every change prevents regressions and builds confidence in deployments.

Effective evaluation is what separates demo-quality RAG systems from production-ready ones. The investment in comprehensive metrics pays dividends through faster iteration, fewer production incidents, and justified confidence in system quality. In regulated environments especially, the ability to demonstrate and document system performance is not optional but essential.
